Since the dawn of cinema, the cut has been one of the most powerful tools in a director’s kit. If we see a man walk through a door and turn his head to the right, and the scene immediately cuts to an image of an apple on a side table, our brain fills in the gap, and we understand that this man is looking at the apple.

That’s because the brain has a natural propensity for smoothing over interruptions in visual input. Whenever we blink, our eyes close for up to half a second, but we don’t notice the breaks. We also make rapid eye movements called saccades several times a second as we adjust to a constantly shifting environment, and we lose access to visual information until the eye movement settles down. This may be why we generally don’t notice cuts in movies: they work like saccades.

But neuroscientist Sergei Gepshtein dreams of a new visual vocabulary for cinema—one that relies much less on the cut, or perhaps even eliminates the cut altogether. “The film industry rests on a narrow selection of possibilities that got discovered early on and then got canonized by the force of inertia and entrenched by filmmaking technology and habit,” he says.


Gepshtein sees some of the most disagreeable traits of entrenched movie technology in today’s blockbuster action movies. In these films, shots last only seconds, and there are regular barrages of rapid-fire cuts. Think *Transformers*, *Battleship*, the *Bourne* trilogy, or *Pacific Rim*. As Scott Derrickson, director of recent thrillers like *Sinister* and *The Day the Earth Stood Still*, laments, “The story is happening to you, but you are not interacting with the story.”

But Gepshtein thinks he can offer an alternative to this trend, and it doesn’t necessarily involve long takes in the style of directors like Alfonso Cuarón, who recently snagged a directing Oscar for *Gravity*. Instead, it involves harnessing the modern science of vision.

In December, I paid a visit to Gepshtein at his workplace, the Systems Neurobiology Laboratories of the Salk Institute in La Jolla, California, its sleek white facade optimally designed to provide stunning ocean views from nearly every angle. Gepshtein, a slim man with a neatly trimmed beard and wire-frame glasses, met me at the front desk, wearing jeans, a blazer, and a natty beret, which made him look a bit like an auteur director himself. We made our way to his tiny office (space at Salk is at a premium), which was filled with piles of papers and video monitors of every conceivable shape and size.

Over the course of the next few hours, Gepshtein walked me through the historical background to his current research in painstaking detail. Context is everything, and for Gepshtein, everything is context. His conversation was peppered with references to Salvador Dalí, Venetian architecture, Gestalt psychology, optical illusions, philosophy, the novels of Stanislaw Lem and Italo Calvino, and films.

Gepshtein expressed frustration with what he sees as a complacency among filmmakers when it comes to cinematic vocabulary. “They don’t feel handicapped in any way,” he told me. “They don’t know that they sit in the corner of a parameter space.”

By “parameter,” Gepshtein means the parameters of human perception, the focus of his work. Technically, Gepshtein is a vision scientist; he studies what we see. Increasingly, however, he has become fascinated by the ways in which we don’t see. At the heart of his work is something called the window of visibility. This might be defined as the range of what’s in front of our eyes that our brains can actually perceive. Vision is selective: The human eye receives far more visual stimulus than the brain can register.

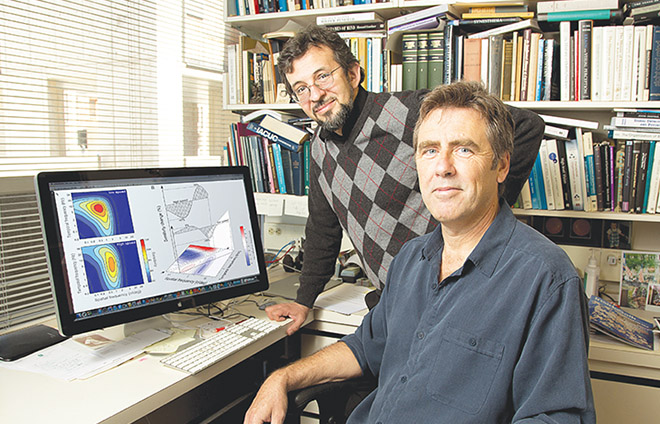

Lend Me Your Mind: Sergei Gepshtein (left), pictured with his colleague Thomas D. Albright, has big plans for movies. (Photo: Salk Institute for Biological Studies)

As Gepshtein explains, movements that are too fast or too slow won’t make it past the brain’s filter. We know the hour hand of a clock moves, but it moves too slowly for us to perceive any motion with our eyes. We also know that each individual spoke of a spinning wheel is sending information to our eyes, but all we see is a blur.
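A bit of arithmetic makes the two examples concrete. The sketch below assumes a 10 cm hour hand viewed from 1 m and a hypothetical wheel with 32 spokes turning 10 times per second; none of these numbers come from the article. The 60 Hz cutoff is likewise an illustrative round figure assumed for this sketch, not a measured limit from Gepshtein's data.

```python
import math

# Illustrative assumption for this sketch, not a value from the article:
# alternations faster than this many per second are treated as a blur.
ASSUMED_TEMPORAL_CUTOFF_HZ = 60.0


def hour_hand_tip_speed_deg_per_s(hand_length_m, viewing_distance_m):
    """Speed of the hour hand's tip in degrees of visual angle per second,
    for a viewer facing the clock (small-angle approximation)."""
    seconds_per_turn = 12 * 60 * 60  # one full turn every 12 hours
    tip_speed_m_per_s = 2 * math.pi * hand_length_m / seconds_per_turn
    return math.degrees(tip_speed_m_per_s / viewing_distance_m)


def spoke_passing_rate_hz(spokes, turns_per_s):
    """How many spokes sweep past one fixed spot in the visual field per second."""
    return spokes * turns_per_s


if __name__ == "__main__":
    slow = hour_hand_tip_speed_deg_per_s(hand_length_m=0.10, viewing_distance_m=1.0)
    fast = spoke_passing_rate_hz(spokes=32, turns_per_s=10)
    print(f"hour-hand tip: {slow:.5f} deg/s, about {slow * 3600:.1f} arcsec/s")
    print(f"spokes: {fast} per second; beyond assumed cutoff: {fast > ASSUMED_TEMPORAL_CUTOFF_HZ}")
```

The first number shows how little the hand's tip travels across the visual field each second (about three seconds of arc); the second shows how many light/dark alternations per second a single point on the wheel produces. The two motions sit at opposite extremes.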

To determine the dimensions of my own window of visibility, I entered a room even smaller than Gepshtein’s office. There, with the lights turned out, I sat at a desk and peered at a computer monitor on which an image composed of alternating light and dark bands—a tool known as a luminance grating, which dates back to vision research in the 1960s—scrolled past my field of vision, constantly varying in speed, frequency, and degree of contrast.

My instructions were to press one button if the scrolling image seemed to be moving upward and another if it seemed to be moving downward. It started easily, but I soon found myself unable to tell in which direction the grating was moving. Sometimes it was because the lines had grown too faint; other times because they were moving too quickly or too slowly. It was frustrating (who likes to fail?) but illuminating.
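The article doesn't give the stimulus parameters, so here is a hedged sketch of how such a stimulus is commonly described. It assumes a sinusoidal light/dark profile (the real bands may have been shaped differently), and the function names and trial values are hypothetical. Speed, frequency, and contrast are linked by the standard relation for a drifting grating: speed equals temporal frequency divided by spatial frequency.

```python
import math


def grating_luminance(y_deg, t_s, spatial_freq_cpd, temporal_freq_hz,
                      contrast, mean_luminance=1.0):
    """Luminance of a drifting sinusoidal grating made of horizontal bands.

    y_deg            vertical position in degrees of visual angle (positive = up)
    t_s              time in seconds
    spatial_freq_cpd bands per degree of visual angle (cycles/deg)
    temporal_freq_hz light-dark cycles per second at any fixed point; a positive
                     value moves the bands upward, a negative one downward
    contrast         Michelson contrast (Lmax - Lmin) / (Lmax + Lmin), 0 to 1
    """
    phase = 2 * math.pi * (spatial_freq_cpd * y_deg - temporal_freq_hz * t_s)
    return mean_luminance * (1 + contrast * math.sin(phase))


def drift_speed_deg_per_s(spatial_freq_cpd, temporal_freq_hz):
    """How fast the bands travel: temporal frequency / spatial frequency."""
    return abs(temporal_freq_hz) / spatial_freq_cpd


def drift_direction(temporal_freq_hz):
    """The correct answer in the up/down task for a given temporal frequency."""
    if temporal_freq_hz > 0:
        return "up"
    if temporal_freq_hz < 0:
        return "down"
    return "static"


if __name__ == "__main__":
    f_y, f_t, c = 2.0, 8.0, 0.5          # hypothetical trial: 2 cycles/deg, 8 Hz, 50% contrast
    v = drift_speed_deg_per_s(f_y, f_t)  # 8 / 2 = 4 deg/s
    print(v, drift_direction(f_t))
    # Shifting the frame at time t up by v * t reproduces the frame at time 0.
    t = 0.05
    print(math.isclose(grating_luminance(0.3 + v * t, t, f_y, f_t, c),
                       grating_luminance(0.3, 0.0, f_y, f_t, c)))
```

Because speed is temporal frequency divided by spatial frequency, fixing any two of the three determines the third, while contrast can be set independently. Lowering `contrast` toward zero makes the bands faint, and pushing the speed very high or very low corresponds to the two other ways the test became impossible.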

Gepshtein and other scientists have compiled the data from tests like the one I took, and the results are remarkably clear. There is only slight variation in the limits of the window of visibility from person to person. The core data is so consistent that it can be thought of as a law of perception.

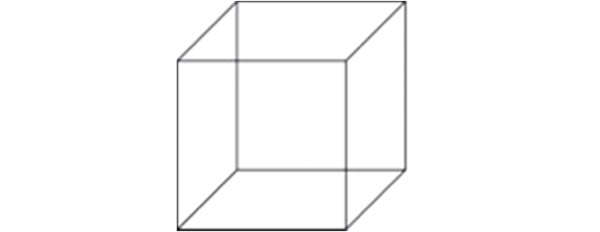

Determining the boundaries of the window of visibility has been an exciting leap forward in understanding the limits of visual perception. But to make this knowledge useful to filmmakers, Gepshtein has been enhancing it with other findings from vision science. If the window of visibility is like the outline of a continent on a map, the other findings are like additional geographical information—the location of mountains, rivers, cities. The most important of them have to do with how our brain organizes the things that fall within our window of visibility. To see an example, consider the crisscrossing lines below:

Objectively, they are just lines, and they fall easily into your window of visibility. But your brain has organized them into a cube shape. What’s more, your brain has picked one of two ways of looking at the cube. If it has chosen to see it as if you’re looking from above, you’ll effectively see it with the following orientation:

But if your brain has chosen to see it as if you’re looking from below, you’ll effectively see it with this orientation:

Either orientation can be discerned in the original image, but the brain cannot see both at once. It will pick one. That is an example of perceptual organization, something we all engage in without realizing it.
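The ambiguity has a simple geometric reading, sketched below under the simplifying assumption of orthographic projection (parallel lines of sight). A flat drawing records only left-right and up-down positions, so a cube and its front-to-back mirror image leave exactly the same lines on the page. Both are perfectly good cubes, yet one shows its top face to the viewer (seen from above) and the other its bottom face (seen from below). The viewing angles are arbitrary, and this is an illustration of the ambiguity only, not a model of how the brain picks one reading.

```python
import math
from itertools import product


def rotate(p, ax, ay):
    """Rotate point p about the x-axis by ax, then about the y-axis by ay (radians)."""
    x, y, z = p
    y, z = y * math.cos(ax) - z * math.sin(ax), y * math.sin(ax) + z * math.cos(ax)
    x, z = x * math.cos(ay) + z * math.sin(ay), -x * math.sin(ay) + z * math.cos(ay)
    return (x, y, z)


def depth_reverse(p):
    """Mirror front-to-back. The viewer sits on the +z axis, so larger z is nearer."""
    x, y, z = p
    return (x, y, -z)


def project(p):
    """Orthographic projection onto the picture plane: depth is simply dropped."""
    return (p[0], p[1])


# A cube with corners at (+/-1, +/-1, +/-1); edges join corners differing in one coordinate.
CORNERS = list(product((-1, 1), repeat=3))
EDGES = [(a, b) for a in CORNERS for b in CORNERS
         if a < b and sum(u != v for u, v in zip(a, b)) == 1]


def drawing(points):
    """The set of 2-D line segments that end up on the page."""
    return {frozenset((project(points[a]), project(points[b]))) for a, b in EDGES}


def edge_lengths(points):
    return [math.dist(points[a], points[b]) for a, b in EDGES]


ax, ay = math.radians(20), math.radians(30)  # arbitrary oblique view
seen_from_above = {c: rotate(c, ax, ay) for c in CORNERS}
seen_from_below = {c: depth_reverse(p) for c, p in seen_from_above.items()}

# Where the cube's top face points in each reading (z > 0 means toward the viewer).
top_normal_above = rotate((0, 1, 0), ax, ay)
top_normal_below = depth_reverse(top_normal_above)

if __name__ == "__main__":
    print("identical line drawings:", drawing(seen_from_above) == drawing(seen_from_below))
    print("top face visible, first reading:", top_normal_above[2] > 0)
    print("top face visible, second reading:", top_normal_below[2] > 0)
```

The image alone cannot settle which cube is "really" there; every edge has the same length in both readings, so the choice has to come from the perceiver.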

If tools for exploiting such quirks of visual perception could be built into standard filmmaking software, they would put the power of perceptual organization into the hands of the director. In principle, filmmakers could manipulate individual elements onscreen to bring them in and out of the viewer’s conscious perception, with no need for cuts. “Parts of an image that are continuously present on the screen can be suppressed from the perceiver’s mind and then brought out at will, just like a magician’s rabbit pulled out of the hat,” Gepshtein anticipates. “In effect, one scene may emerge in the middle of the other without cuts, and without the artificial tools of image morphing or dissolves.”

This is hard to envision, and even Gepshtein struggles to describe the future cinematic experience. But what if, instead of cutting to a new scene, the filmmaker swapped out one setting for another without us even noticing? Techniques reminiscent of this have already been used, albeit without the subconscious seamlessness Gepshtein envisions, in movies like *Speed Racer* (2008).

Gepshtein believes the cinema of the future might even be a shared, immersive experience, one in which the events seem to unfold all around the viewer. “You could enter it like architecture, and there could be other people in the same space,” he says.

Because the science of vision is relevant to so many things, the potential applications of Gepshtein’s research range widely, from theater and virtual world-building in video games to highway signage, advertising displays, and building design. Architects are especially interested in researching the effect of their structures on human perception—something they’d like to know before they start building—so Gepshtein has received a small seed grant from the Academy of Neuroscience for Architecture, an organization that links neuroscience research to how we respond to the built environment.

Still, Gepshtein hopes especially to apply his maps of perception to film. For now, he recognizes that conveying his vision to working directors is going to be a challenge—“We don’t have a language for this,” he notes—but he believes a pilot visualization of the project would help overcome this barrier, and he is looking for funding to create a short demo reel.

Derrickson, the director, says he would love to see such a demonstration. “Somebody is going to make a movie at some point that does employ a new language,” he says. “I just can’t imagine what that would be, unless neuroscience brings in a new methodology of perception that somehow transcends our own way of looking at the world.”

That’s precisely what Sergei Gepshtein has in mind.

This post originally appeared in the May/June 2014 issue of *Pacific Standard* as “The Cut Stays Out of the Picture.” For more, subscribe to our print magazine.